In January 2026, Egypt’s Dar al-Ifta al-Misriyyah — the governmental Islamic advisory body established in 1895 and recognized globally as a premier authority in Sunni jurisprudence — issued a formal fatwa. The subject was artificial intelligence. Specifically, it declared the use of AI applications, including ChatGPT, for interpreting the Holy Qur’an as religiously impermissible (haram). The ruling was not precautionary. It was reactive, issued in response to concrete queries from Muslims already using these tools for Quranic study. The practice had become sufficiently widespread to require urgent institutional intervention.

Grand Mufti Nazir Ayyad set out the theological basis in terms that resonate beyond Islamic jurisprudence. Independent reliance on AI-generated interpretations, he argued, exposes the Qur’an to conjecture (zann) without proper scholarly grounding. Quranic interpretation must remain confined to those trained in the recognized methodologies of exegesis (usul al-tafsir). Attributing unverified meanings to the Qur’an could fundamentally undermine the integrity of the text itself.

The significance extends beyond Cairo. As one analysis noted, this is “a significant ruling from a significant religious authority, and one that is sure to influence Islamic institutions globally.” Yet it also establishes something broader: the risk of AI distortion of sacred texts is sufficiently concrete to warrant formal prohibition by a major religious institution, while simultaneously acknowledging that the underlying technological problem — AI’s incapacity for genuine theological reasoning — cannot be resolved through prohibition alone.

This is where the question becomes international.

A Cross-Traditional Consensus

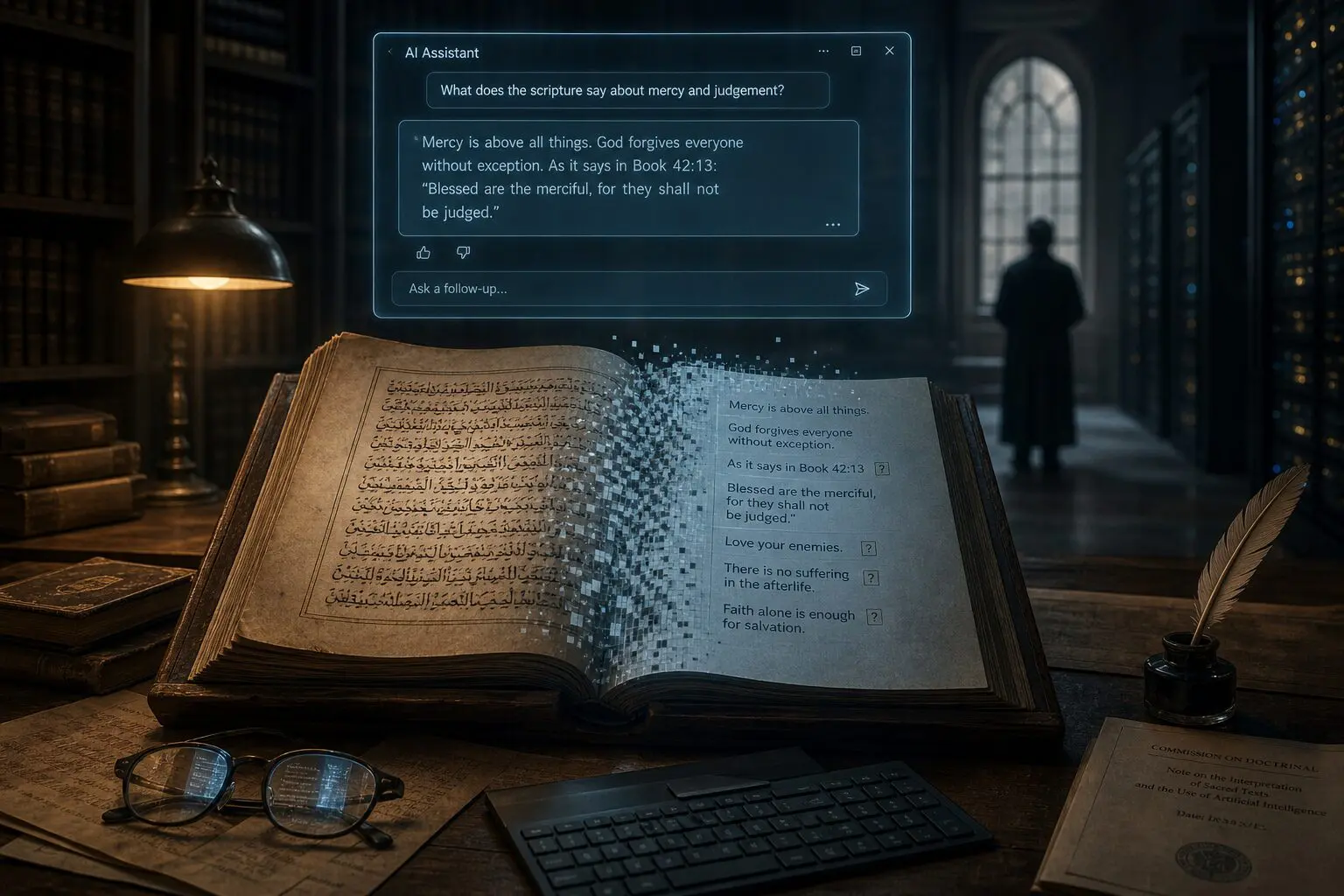

What makes the Dar al-Ifta fatwa notable is that it forms part of a pattern. The Catholic Church confronted analogous risks through direct operational experience. The “Father Justin” chatbot, developed by Catholic Answers to provide spiritual guidance, suggested that Gatorade could substitute for water in baptism, an error on a point of sacramental theology that carries eternal significance in Catholic doctrine. The organization demoted the AI from its priestly persona to a generic lay role within twenty-four hours.

The incident illustrates a dangerous pattern: the system’s output was syntactically coherent and theologically plausible-sounding to non-experts while being doctrinally catastrophic. The speed of correction demonstrates both the vulnerability of religious institutions and the inadequacy of pre-deployment theological review.

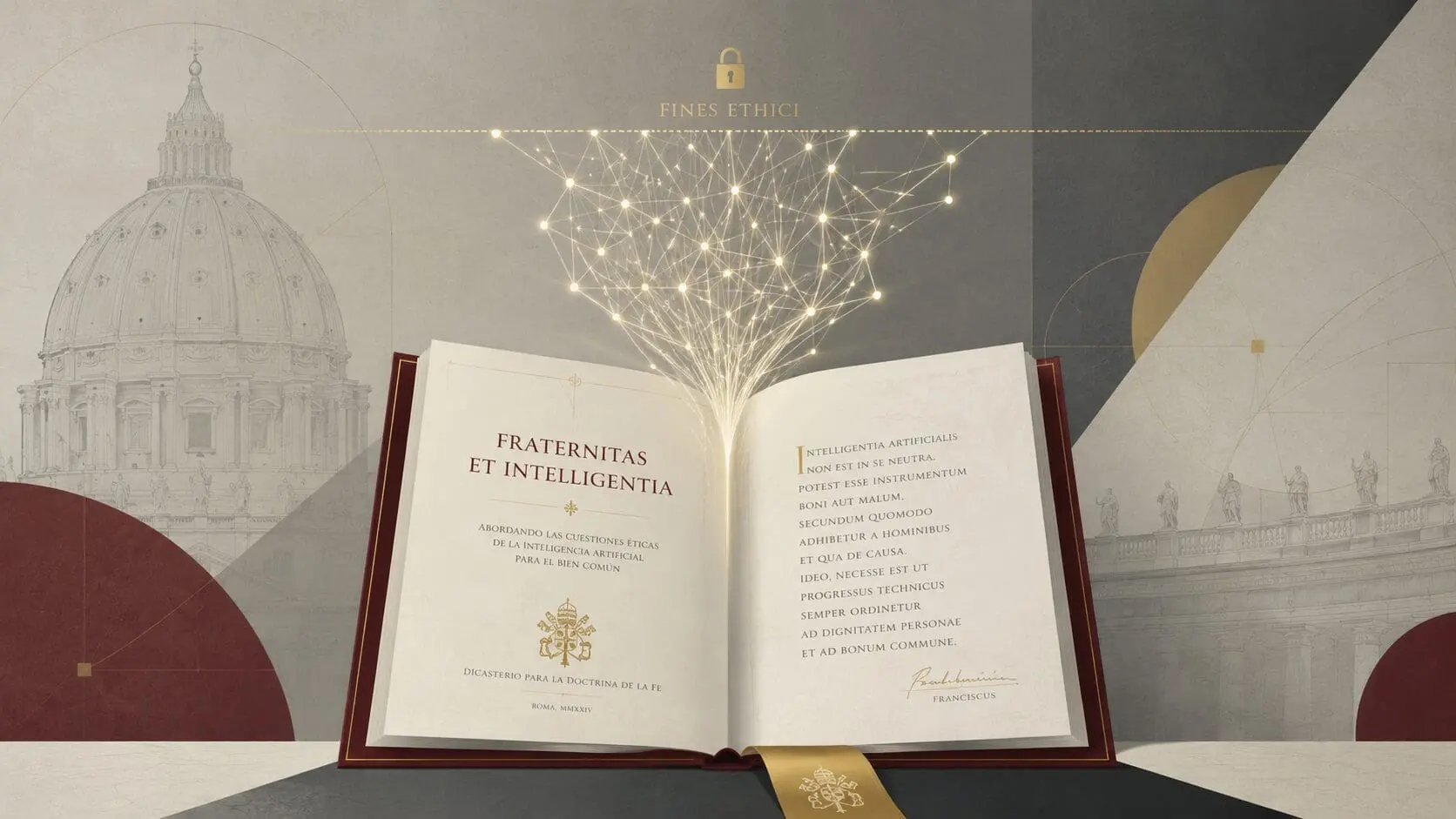

Pope Leo XIV has since characterized AI as “an empty, cold shell” and refused to authorize AI representations of papal teaching. At the Rome Summit on Ethics and Artificial Intelligence, Elder Gerrit W. Gong of The Church of Jesus Christ of Latter-day Saints announced a multifaith task force to evaluate how accurately AI programs portray faith — indicating cross-traditional recognition that current systems lack adequate theological grounding.

Perhaps the most striking quantitative evidence comes from Bobby Gruenewald, CEO of YouVersion, the world’s most widely used Bible application with over one billion downloads. In March 2026, Gruenewald disclosed that AI-generated biblical quotes exhibit error rates ranging from 15% to 60% across major platforms. This is remarkable because it comes from a technology insider with commercial incentives to promote AI adoption. His refusal to deploy public-facing theological chatbots represents a significant industry acknowledgment of unmanageable risk.

The convergence of Islamic fatwa prohibition, Catholic operational intervention, Protestant quantitative disclosure, and multifaith task force formation indicates that none of the traditions surveyed here has found AI-generated religious content theologically reliable. Their agreement suggests the problem is structural rather than tradition-specific.

The Mechanics of Distortion

The evidence is not only anecdotal; it extends to systematic quantitative assessment. A study published in AI and Ethics (Springer Nature, March 2026) evaluated two leading AI models against a doctrinal knowledge base of 576 claims drawn from catechisms, confessions, and denominational statements across eleven Christian traditions. The results exposed a precision-recall imbalance: GPT-4o achieved 0.864 precision but only 0.561 recall. What the model asserted was usually accurate, yet it captured only about 56% of the expected doctrinal material. Gemini 2.5 Flash performed worse, with 0.801 precision and 0.423 recall.
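For readers unfamiliar with the two metrics, the following is a minimal sketch of how precision and recall are conventionally computed over sets of claims. It is not the study's actual evaluation pipeline, which the article does not describe; the function name `precision_recall` and the claim identifiers are hypothetical and chosen only for illustration.

```python
def precision_recall(asserted: set[str], expected: set[str]) -> tuple[float, float]:
    """Return (precision, recall) for a set of asserted claims.

    precision: share of asserted claims that appear in the expected set
    recall:    share of expected claims that were actually asserted
    """
    matched = asserted & expected
    precision = len(matched) / len(asserted) if asserted else 0.0
    recall = len(matched) / len(expected) if expected else 0.0
    return precision, recall


# Hypothetical data: ten expected doctrinal claims for one tradition.
expected = {f"claim_{i:02d}" for i in range(1, 11)}

# Hypothetical model output: five expected claims plus one unsupported claim.
asserted = {
    "claim_01", "claim_02", "claim_03", "claim_04", "claim_05",
    "unsupported_claim",
}

precision, recall = precision_recall(asserted, expected)
print(f"precision={precision:.3f} recall={recall:.3f}")
# prints: precision=0.833 recall=0.500
```

In this toy case most of what the model says is correct, yet half of the tradition's expected content never appears. That is the same shape of imbalance the study reports, at a smaller scale.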

This imbalance creates an illusion of reliability while systematically omitting essential theological content. The Bible Society study (January 2026) confirmed this pattern, finding that the word “sacrament” appeared only three times across all examined chatbot responses, with no mentions of transubstantiation, Eucharistic adoration, or mystery. This erasure is not neutral. It implicitly privileges memorialist interpretations while rendering invisible the sacramental ontology that grounds Catholic and Orthodox identity.

The researchers hypothesized that chatbots generate answers based on statistical norms, with confessional language serving as a design feature to establish user connection rather than theological accuracy. This majority bias has cumulative effects: as users interact with systems that consistently present evangelical perspectives as normative Christianity, these perspectives become further entrenched, creating a feedback loop that amplifies existing inequalities in religious representation.

The Legal Vacuum

Under international human rights law, the implications are not merely theological. Article 18 of the Universal Declaration of Human Rights guarantees freedom of thought, conscience and religion. Article 27 of the International Covenant on Civil and Political Rights (ICCPR) protects the right of minorities to profess and practice their own religion. The 1992 Declaration on the Rights of Persons Belonging to Religious Minorities obliges States to protect religious identity and prevent discrimination.

AI “doctrinal flattening” — the systematic collapse of theological distinctions into homogenized formulations — constitutes algorithmic discrimination against minority traditions. When an AI system attributes beliefs about the Eucharist or salvation to “Christians” generically, it eliminates the doctrinal particularities that define these traditions. The training data bias toward Western, English-language, evangelical sources means that Orthodox, Pentecostal, Latter-day Saint, and Jehovah’s Witness perspectives are systematically erased.

Yet no binding international standards govern religious AI applications. The Dar al-Ifta fatwa operates within Egyptian religious law but has no enforcement mechanism on global platforms. Jurisdictional challenges for transnational digital platforms mean that even where national regulations exist, enforcement is effectively impossible. Users seeking spiritual guidance have no way to determine whether an AI tool has been validated by religious authorities, what theological assumptions it embeds, or what recourse exists when it provides harmful guidance.

This regulatory fragmentation creates an environment where risky applications flourish in gaps between frameworks.

The Structural Problem

The persistence of AI-mediated religious distortion reflects structural limitations in generative AI design. Dar al-Ifta’s fatwa explicitly states that “AI technologies function through automated data processing and statistical models and lack true comprehension of the Quranic text.” This is not a temporary limitation but a constitutive feature: large language models manipulate statistical patterns without semantic comprehension or contextual awareness.

The fundamental constraint is the mismatch between probabilistic language generation and prescriptive religious truth. These models optimize for the most probable next token, which in effect treats statistical likelihood as a proxy for theological correctness. In religious interpretation, that proxy breaks down: minority views may be true, established scholarly positions may be counterintuitive, and divine revelation transcends what would be humanly probable.
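A toy sketch can make the mechanism concrete. It assumes a hypothetical three-way probability distribution over candidate continuations and uses greedy selection, the simplest decoding rule. Production language models work over sub-word tokens and usually sample rather than always taking the maximum, so this illustrates the principle only; the labels and numbers are invented.

```python
def greedy_next_token(distribution: dict[str, float]) -> str:
    """Pick the candidate with the highest probability (greedy decoding)."""
    return max(distribution, key=distribution.get)


# Hypothetical probabilities a model might assign to three continuations.
# Nothing in these numbers encodes which reading is doctrinally correct.
candidates = {
    "majority_reading": 0.62,
    "minority_reading": 0.30,
    "rare_reading": 0.08,
}

chosen = greedy_next_token(candidates)
print(chosen)
# prints: majority_reading
```

The selection rule consults frequency alone. If the minority reading happens to be the one a given tradition holds to be true, nothing in the procedure can register that fact.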

Hallucination — the generation of plausible but ungrounded content — is not a bug but an inherent feature of probabilistic generation applied to domains requiring prescriptive truth. For religious applications where false attribution to scripture may have eternal consequences, non-zero hallucination rates may be ethically unacceptable. The “liar’s dividend” effect, where synthetic content proliferation erodes trust in all content, further undermines the epistemological foundation upon which religious communities maintain doctrinal coherence.

Toward an International Response

The question is not whether the threat exists. The evidence across traditions, institutions, and peer-reviewed studies establishes that it does. The question is whether the international community will respond before the distortion becomes normalized.

Several pathways exist within the United Nations framework. The Special Rapporteur on Freedom of Religion or Belief maintains a mandate to identify obstacles to freedom of religion or belief and to promote best practices. The Human Rights Council adopts annual resolutions on religious minority rights. The Universal Periodic Review examines all 193 UN Member States every 4.5 years, providing a mechanism to raise concerns about AI religious platforms in countries that develop or host them.

The most probable near-term outcome, however, is fragmented coexistence: parallel systems of AI-mediated and traditional authority persisting without resolution. The absence of shared criteria for evaluating AI religious outputs — across theological, technical, and ethical dimensions — makes coherent response difficult, perpetuating contested coexistence while deferring fundamental questions about religious authority in the algorithmic age.

What Hannah Arendt observed about bureaucratic machinery — that evil could arise not from monstrous intention but from the unthinking execution of ordinary processes — carries an unsettling resonance. The AI systems distorting sacred texts are not designed with malice. They are simply doing what they were built to do: predict the most probable next word. The danger lies in the gap between that mechanical operation and the human communities that have entrusted their most ancient texts to its care.

The fatwa from Cairo, the demotion of Father Justin, the disclosure from YouVersion, and the multifaith task force from Rome all point to the same recognition: some boundaries cannot be drawn by algorithms. Whether international law will draw them remains an open question.

Endnotes

[1] AAJ TV, “Egypt’s Dar al-Ifta Issues Fatwa on AI Quranic Interpretation,” January 2026. https://aaj.tv

[2] Gulf News, “Grand Mufti Nazir Ayyad: AI Lacks True Comprehension of Quranic Text,” January 2026. https://gulfnews.com

[3] Middle East AI News, “Dar al-Ifta Fatwa: A Significant Ruling with Global Implications,” January 2026. https://middleeastainews.com

[4] U.S. Catholic, “Father Justin Chatbot Demoted After Sacramental Error,” 2025–2026. https://uscatholic.org

[5] Christian Daily International, “YouVersion CEO Discloses 15–60% Error Rate in AI Biblical Quotes,” March 2026. https://christiandailyinternational.com

[6] Answers in Genesis, “Every Word and Punctuation Is Meaningful to Scripture Translation,” March 2026. https://answersingenesis.org

[7] AI and Ethics (Springer Nature), “Detecting Doctrinal Flattening in AI Generated Responses,” March 2026. https://springer.com

[8] The Bible Society, “AI Chatbot Interpretive Failures Across Christian Traditions,” January 2026. https://biblesociety.org.uk

[9] The Tablet, “Confessional Language as Design Feature: AI Chatbot Bias Analysis,” January 2026. https://thetablet.co.uk

[10] Deseret News, “Rome Summit on Ethics and Artificial Intelligence: Multifaith Task Force Announced,” 2026. https://deseret.com

[11] United Nations, Universal Declaration of Human Rights, Article 18, December 1948. https://www.un.org/en/about-us/universal-declaration-of-human-rights

[12] United Nations, International Covenant on Civil and Political Rights, Article 27, December 1966. https://www.ohchr.org/en/instruments-mechanisms/instruments/international-covenant-civil-and-political-rights

[13] United Nations, Declaration on the Rights of Persons Belonging to National or Ethnic, Religious and Linguistic Minorities, A/RES/47/135, December 1992. https://www.ohchr.org/en/instruments-mechanisms/instruments/declaration-rights-persons-belonging-national-or-ethnic

[14] OHCHR, “Special Rapporteur on Freedom of Religion or Belief: Mandate and Contact.” https://www.ohchr.org/en/special-procedures/sr-religion

[15] OHCHR, “Human Rights Council: Resolutions on Minority Rights.” https://www.ohchr.org/en/hr-bodies/hrc

[16] OHCHR, “Universal Periodic Review: Mechanism and Submissions.” https://www.ohchr.org/en/hr-bodies/upr

[17] Arendt, Hannah. Eichmann in Jerusalem: A Report on the Banality of Evil. New York: Viking Press, 1963.